This article originally appeared on Greater Good and is republished with permission.

Eighteen months ago, Arturo Bejar and some colleagues at Facebook were reviewing photos on the site that users had flagged as inappropriate. They were surprised by the offending content—because it seemed so benign.

“People hugging each other, smiling for the camera, people making goofy faces—I mean, you could look at the photographs and you couldn’t tell at all that there was something that would make somebody upset,” says Bejar, a director of engineering at the social networking site.

Arturo Bejar, a Facebook engineer who has been leading its "social reporting" project, speaking at Facebook's second Compassion Research Day on July 11. Photo credit: Jeffrey Gerson/Facebook.

Then, while studying a photo, one of his colleagues realized something: The person who reported the photo was actually in the photo, and the person who posted the photo was their friend on Facebook.

As the team scrolled through other images, they noticed that was true in the vast majority of cases: Most of the issues involved people who knew each other but apparently didn’t know how to resolve a problem between themselves.

Someone would be bothered by a photo of an ex-boyfriend or ex-girlfriend, for instance, or would be upset because he or she didn’t appear in a photo that ostensibly showed a friend’s “besties.” Often people didn’t like that their kids were in a photo a relative had uploaded. And sometimes they just didn’t like the way they looked.

Facebook didn’t have foolproof ways to identify or analyze these problems, let alone resolve them. And that made Bejar and his colleagues feel like they weren’t adequately serving the Facebook community—a concern amplified by the site’s exponential growth and worries about cyberbullying among its youngest users.

“When you want to support a community of a billion people,” says Bejar, “you want to make sure that those connections over time are good and positive and real.”

A daunting mission, but it’s one that Bejar has been leading at Facebook, in collaboration with a team of researchers from Yale University and UC Berkeley, including scientists from the Greater Good Science Center. Together, they’re drawing on insights from neuroscience and psychology to try to make Facebook feel like a safer, more benevolent place for adults and kids alike—and even help users resolve conflicts online that they haven’t been able to tackle offline.

“Essentially, the problem is that Facebook, just like any other social context in everyday life, is a place where people can have conflict,” says Paul Piff, a postdoctoral psychology researcher at UC Berkeley who is working on the project, “and we want to build tools to enable people who use Facebook to interact with each other in a kinder, more compassionate way.”

Facebook as relationship counselor

For users troubled by a photo, Facebook provides the option to click a Report link, which takes them through a sequence of screens where they can elaborate on the problem, called the “reporting flow.”

Up until a few months ago, the flow presented all “reporters” with the same options for resolving the problem, regardless of what that problem actually was; those resolutions included unfriending the user or blocking him or her from ever making contact again on Facebook.

“One thing that we learned is that if you give someone the tool to block, that’s actually not in many cases the right solution because that ends the conversation and doesn’t necessarily resolve anything—you just sort of turn a blind eye to it,” says Jacob Brill, a product manager on Facebook’s Site Integrity and Support Engineering team, which tries to fix problems users are experiencing on the site, from account fraud to offensive content.

Jacob Brill, a Facebook product manager, presenting some of his social reporting team's preliminary findings at the second Compassion Research Day. Photo credit: Jeffrey Gerson/Facebook.

Instead, Brill’s team concluded that a better option would be to facilitate conversations between a person reporting content and the user who uploaded the content, a system that they call “social reporting.”

“I really think that was key—that the best way to resolve conflict on Facebook is not to have Facebook step in, but to give people tools to actually problem-solve themselves,” says Piff. “It’s like going to a relationship counselor to resolve relationship conflict: Relationship counselors are there to give couples tools to resolve conflict with each other.”

To help Facebook develop those tools, Bejar turned to Piff and two of his UC Berkeley colleagues, social psychologist Dacher Keltner and neuroscientist Emiliana Simon-Thomas—the GGSC’s faculty director and science director, respectively—all of whom are experts in the psychology of emotion.

“It felt like we could sharpen their communication,” says Keltner, “just to make it smarter emotionally, giving kids and adults sharper language to report on the complexities of what they were feeling.”

The old reporting flow wasn’t very emotionally intelligent. When first identifying the problem to Facebook, users had some basic options: They could select “I don’t like this photo of me,” claim that the photo was harassing them or a friend, or say that it violated one of the site’s Community Standards—for hate speech or drug use or violence or some other offense. Then they could unfriend or block the other user, or send that user a message.

Initially, users had to craft that message themselves, and only 20 percent of them actually sent a message. To boost that rate, Facebook provided some generic default text—“Hey I don’t like this photo. Please remove it.”—which raised the send rate to 51 percent. But often users would send one of these messages and never hear back, and the photo wouldn’t get deleted.

Bejar, Brill, and others at Facebook thought they could do better. The Berkeley research team believed this flow was missing an important step: the opportunity for users to identify and convey their emotions. That would guard against the fact that it’s easier for people online to be insensitive or even oblivious to how their actions affect others.

“If you get someone to express more productively how they’re feeling, that’s going to allow someone else to better understand those feelings, and try to address their needs,” says Piff. “There are some very simple things we can do to give rise to more productive interpersonal interactions.”

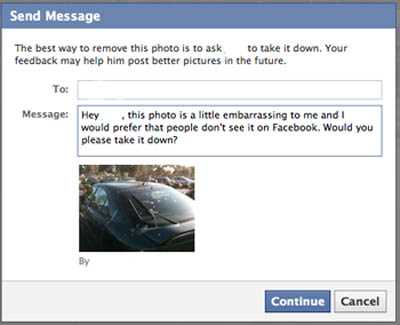

Instead of simply having users click “I don’t like this photo,” for instance, the team decided to prompt users with the sentence “I don’t like this photo because:”, which they could complete with emotion-laden phrases, such as “It’s embarrassing” or “It makes me sad” (see the screenshot above). People reporting photos selected one of these options 78 percent of the time, suggesting that the list of phrases effectively captured what they were feeling.

People were then taken to a screen telling them that the best way to remove the photo was to ask the other user to take it down—blocking or unfriending were no longer presented as options—and they were given more emotionally intelligent text for a message they could send through Facebook, tailored to the particular situation.

The emotionally intelligent message UC Berkeley researchers developed for Facebook "reporters." (User's name has been erased.) Courtesy of Facebook.

That text included the other person’s name, asked him or her more politely to remove the content (“would you please take it down?” vs. the old “please remove it”), and specified why the user didn’t like the photo, emphasizing their emotional reaction and point of view—but still keeping a light touch. For example, photos that made someone embarrassed are described as “a little embarrassing to me.” (See the screenshot at left for an example.)

It worked. Roughly 75 to 80 percent of people in the new, emotionally intelligent flow sent these default messages without revising or editing the text, a 50 percent increase from the number who sent the old, impersonal message.

When Keltner and his team presented these findings at Facebook’s second Compassion Research Day, a public event held on Facebook’s campus earlier this month, he emphasized that what mattered wasn’t just that more users were sending messages but that they were enjoying a more positive overall experience.

“There are a lot of data that show when I feel stressed out, mortified, or embarrassed by something happening on Facebook, that activates old parts of the brain, like the amygdala,” Keltner told the crowd. “And the minute I put that into words, in precise terms, the prefrontal cortex takes over and quiets the stress-related physiology.”

Preliminary data seem to back this up. Among the users who sent a message through this new flow, roughly half said they felt positively about the other person (called the “content creator”) after they sent him or her the message; less than 20 percent said they felt negatively. (The team is still collecting and analyzing data on how users feel before they send the messages, and on how positively they felt after sending a message through the old flow.)

In this new social reporting system, half of content creators deleted the offending photo after they received the request to remove it, whereas only a third deleted the photo under the old system. Perhaps more importantly, roughly 75 percent of the content creators replied to the messages they received, using new default text that the researchers crafted for them. That’s a nearly 50 percent increase from the number who replied to the old messages.

“The right resolution isn’t necessarily for the photo to be taken down if in fact it’s really important to the person who uploaded it,” says Brill. “What’s really important is that you guys are talking about that, and that there is a dialogue going back and forth.”

This post is a problem

That’s all well and good for Facebook’s adult users, but kids on Facebook often need more. For them, Facebook’s hazards include cyberbullying from peers and manipulation by adult predators. Rough estimates indicate that more than half of kids have had someone say mean or hurtful things to them online.

Previously, if kids felt hurt or threatened by someone on Facebook, they could click the same Report link adults saw, which took them through a similar flow, asking if they or friends were being “harassed.” From there, Facebook gave them the option to block or unfriend that person and send him or her a message, while also suggesting that they contact an adult who could help.

Yale developmental psychologist Marc Brackett discussing his collaboration with Facebook at the second Compassion Research Day. Photo credit: Jeffrey Gerson/Facebook.

But after hearing Yale developmental psychologist Marc Brackett speak at the first Compassion Research Day in December of 2011, Bejar and his colleagues realized that the old flows failed to acknowledge the particular emotions that these kids were experiencing. That oversight might have made the kids less likely to engage in the reporting process and contact a supportive adult for guidance.

“The way you really address this,” Bejar said at the second Compassion Research Day, “is not by taking a piece of content away and slapping somebody’s hand, but by creating an environment in which children feel supported.”

To do that, he enlisted Brackett and two of his colleagues, Robin Stern and Andres Richner. The research team organized focus groups with 13- and 14-year-olds, the youngest age group officially allowed on Facebook, and interviewed kids who’d experienced cyberbullying. The team wanted to create tools that were developmentally appropriate for different age ranges, and they decided to target this youngest group first, then work their way up.

From talking with these adolescents, they pinpointed some of the problems with the language Facebook was using. For instance, says Brackett, some kids thought that clicking “Report” meant that the police would be called, and many didn’t feel that “harassed” accurately described what they had been experiencing.

Instead, Brackett and his team replaced “Report” with language that felt more informal: “This post is a problem.”

They tried to apply similar changes across the board, refining language to make it more age-appropriate. Instead of simply asking kids whether they felt harassed, they enabled kids to choose among far more nuanced reasons for reporting content, including that someone “said mean things to me or about me” or “threatened to hurt me” or “posted something that I just don’t like.” They also asked kids to identify how the content made them feel, selecting from a list of options.

Depending on the problem they identified, the new flows gave kids more customized options for the action they could take in response. That included messages they could send to the other person, or to a trusted adult, that featured more emotionally rich and specific language, tailored to the type of situation they were reporting.

“We wanted to make sure that they didn’t feel isolated and alone—that they would receive support in a way that would help them reach out to adults who could provide them with the help that they needed,” Brackett said when presenting his team’s work at the second Compassion Research Day.

After testing these new flows over two months, the team made some noteworthy discoveries. One surprise was that, when kids reported problems that they were experiencing themselves, 53 percent of those problems concerned posts that they “didn’t like,” whereas only 3 percent of the posts were seen as threatening.

“The big takeaway here is that … a lot of the cases are interpersonal conflicts that are really best resolved either between people or with a trusted adult just giving you a couple of pointers,” Jacob Brill said at the recent Compassion Research Day. “So we’re giving [kids] the language and the resources to help with a situation.”

And those resources do seem to be working: 43 percent of kids who used these new flows reached out to a trusted adult when reporting a problem, whereas only 19 percent did so with the old flows.

“The new experience that we’re providing is empowering kids to reach out to someone they trust to get the help that they need,” says Brackett. “There’s nothing more gratifying than being able to help the most amount of kids in the quickest way possible.”

Social reporting 2.0

Everyone involved in the project stresses that it’s still in its very early stages. So far, it has targeted only English-language Facebook users in the United States. Brackett’s team’s work has focused only on 13- and 14-year-olds, and the new flows developed by the Berkeley team were piloted on only 50 percent of Facebook users, randomly selected.

The GGSC's Emiliana Simon-Thomas discusses her work with Facebook at the second Compassion Research Day. Photo credit: Jeffrey Gerson/Facebook.

Can they build a more emotionally intelligent form of social reporting that works for different cultures and age groups?

“Our mission at Facebook is to do just that,” says Brill. “We will continue to figure out how to make this work for anyone who has experiences on Facebook.”

The teams are already working to improve upon the results they presented at the second Compassion Research Day. Brackett says he believes they can encourage even more kids on Facebook to reach out to trusted adults, and he’s eager to start helping older age groups. He’s excited by the potential impact.

“When we do our work in schools, it’s one district, one school, one classroom at a time,” he says. “Here, we have the opportunity to reach tens of thousands of kids.”

And that reach carries exciting scientific implications for the researchers.

“We’re going to be the ones who get to go in and have 500,000 data points,” says Simon-Thomas. “It’s beyond imagination for a research lab to get that kind of data, and it really taps into the questions we’re interested in: How does conveying your emotion influence social dynamics in rich and interesting ways? Does it facilitate cooperation and understanding?”

And what’s in it for Facebook?

Bejar, the father of two young children, says that protecting kids and strengthening connections between Facebook users make the site more self-sustaining in the long run. The project will have succeeded, he says, if it encourages more users simply to think twice before posting a photo that might embarrass a friend or to notify that friend when they post a questionable image.

“It’s those kinds of kind, compassionate interactions,” he says, “that help build a sustainable community.”